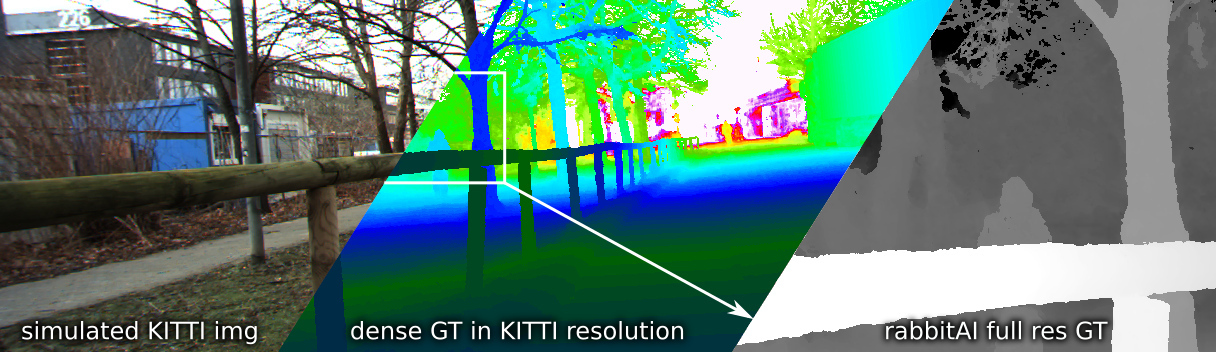

Welcome to the rabbitAI depth prediction benchmark. Please see our paper for a detailed description.

News

- 2021-11-25 Deactivated benchmark upload. Contact us if you are still interested in the data.

- 2020-08-17 The MiDaS RVC baseline was updated with author-provided results; metrics have changed due to a better choice of scaling.

- 2020-08-11 Added a baseline method for the RVC Challenge: BTSREF_RVC

- 2020-07-06 We have added another pretrained reference method: MiDaS

- 2020-06-14 Submissions are now open: submit your algorithm or result

- 2020-04-08 Training data is now available

- 2020-04-06 Our paper has been accepted to SAIAD 2020 (paper/bibtex)

- 2020-04-03 We fixed a bug in the accounting of all metrics; results have changed slightly, and bump30 changed significantly.

- 2020-03-22 Public leaderboard is online

Leaderboard

Public leaderboard, sorted by Avg30. Click on the method name to see detailed results for the method.

| Network | avg30 | miss30 | fake30 | missSt30 | fakeSt30 | bump30 | Avg ScaleError | Avg Offset [m] | silog [%] | sq_rel [%] | abs_rel [%] | rmse_inv [1/km] |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| SemiDepth | 0.26 | 0.37 | 0.45 | 0.20 | 0.08 | 0.18 | 0.96 | 2.58 | 38.58 | 26.35 | 26.56 | 38.54 |
| RMDP_RVC | 0.27 | 0.41 | 0.18 | 0.14 | 0.14 | 0.47 | 1.05 | 1.21 | 33.17 | 23.5 | 25.12 | 29.68 |
| MiDaS | 0.30 | 0.55 | 0.31 | 0.16 | 0.04 | 0.43 | 0.88 | 1.82 | 35.6 | 24.12 | 29.71 | 35.79 |
| DenseDepth | 0.32 | 0.53 | 0.41 | 0.25 | 0.04 | 0.35 | 1.20 | 3.88 | 38.26 | 13.5 | 24.94 | 35.5 |
| BTSREF_RVC | 0.35 | 0.41 | 0.65 | 0.18 | 0.25 | 0.25 | 1.06 | 1.57 | 33.77 | 19.12 | 24.08 | 30.02 |
| packnSFMHR_RVC | 0.42 | 0.41 | 0.71 | 0.24 | 0.31 | 0.43 | 0.96 | 2.49 | 37.94 | 17.82 | 25.97 | 38.53 |
| MonoResMatch | 0.46 | 0.27 | 0.82 | 0.24 | 0.43 | 0.55 | 1.03 | 3.25 | 43.33 | 21.78 | 29.2 | 48.49 |
| BTS | 0.51 | 0.25 | 1.00 | 0.22 | 0.92 | 0.14 | 0.94 | 2.42 | 51.09 | 15.91 | 27.38 | 50.41 |
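The last four leaderboard columns are classic per-pixel depth errors. The sketch below shows how such numbers are commonly computed, assuming KITTI-style definitions (silog as the spread of log ratios, relative errors normalized by ground truth, inverse depth in 1/km). These definitions are an assumption on our part: the benchmark's exact masking and scale alignment (see Avg ScaleError and Avg Offset) are described in the paper and are not reproduced here, and `depth_metrics` is a hypothetical helper name.

```python
import numpy as np


def depth_metrics(pred: np.ndarray, gt: np.ndarray) -> dict:
    """Per-image depth errors using common KITTI-style definitions (assumed).

    pred, gt: depth maps in meters with the same shape.
    Pixels where either depth is <= 0 are treated as invalid and ignored.
    """
    valid = (gt > 0) & (pred > 0)
    p = pred[valid].astype(np.float64)
    g = gt[valid].astype(np.float64)

    # Scale-invariant log error in percent: spread of the per-pixel log ratios.
    d = np.log(p) - np.log(g)
    silog = np.sqrt(max(np.mean(d ** 2) - np.mean(d) ** 2, 0.0)) * 100.0

    # Relative absolute and squared errors, both dimensionless, in percent.
    abs_rel = np.mean(np.abs(p - g) / g) * 100.0
    sq_rel = np.mean(((p - g) / g) ** 2) * 100.0

    # RMSE of inverse depth; 1000 / depth_in_m is inverse depth in 1/km.
    rmse_inv = np.sqrt(np.mean((1000.0 / p - 1000.0 / g) ** 2))

    return {"silog": silog, "sq_rel": sq_rel, "abs_rel": abs_rel, "rmse_inv": rmse_inv}
```

Because silog subtracts the mean log ratio, a prediction that is off by a constant factor (e.g. `2.0 * gt`) gets a silog of about zero while abs_rel and sq_rel both report 100%.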

Distance Metrics

The following plots evaluate our interpretable metrics over a range of 3 to 100 meters; see the paper for details. Note that all metrics only operate in the drivable corridor, up to 2 m above the street surface.
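As a rough illustration of the evaluation volume, the sketch below keeps only 3D points between 3 and 100 m away and at most 2 m above the street, then averages a per-point score inside distance bins, which is one way to produce curves over distance. All names are hypothetical, using Euclidean distance is an assumption, and the lateral extent of the drivable corridor is not modeled; the paper defines the actual procedure.

```python
import numpy as np


def corridor_mask(points, height_above_street, min_dist=3.0, max_dist=100.0, max_height=2.0):
    """Hypothetical filter for the evaluation volume.

    points: (N, 3) array of 3D points in meters, relative to the camera.
    height_above_street: (N,) height of each point above the street surface in meters.
    Keeps points within [min_dist, max_dist] meters (Euclidean distance, an
    assumption) and between 0 and max_height meters above the street.
    The lateral extent of the drivable corridor is not modeled here.
    """
    dist = np.linalg.norm(np.asarray(points, dtype=np.float64), axis=1)
    h = np.asarray(height_above_street, dtype=np.float64)
    return (dist >= min_dist) & (dist <= max_dist) & (h >= 0.0) & (h <= max_height)


def binned_mean(values, dist, edges):
    """Average a per-point score inside each distance bin [edges[b], edges[b+1])."""
    values = np.asarray(values, dtype=np.float64)
    idx = np.digitize(dist, edges) - 1  # bin index b with edges[b] <= dist < edges[b+1]
    out = np.full(len(edges) - 1, np.nan)  # empty bins stay NaN
    for b in range(len(edges) - 1):
        sel = idx == b
        if np.any(sel):
            out[b] = values[sel].mean()
    return out
```

Empty bins are left as NaN rather than zero so that a plot shows a gap instead of a misleading perfect score.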

Miss

The Miss metric detects obstacles (on and off-street) that are missing from the algorithm's result, for example parked cars, trees, barriers, bollards, and buildings.

Fake

The Fake metric detects obstacles (on and off-street) that are hallucinated in places that should be empty (and hence drivable).
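The Miss and Fake ideas can be illustrated together on boolean occupancy grids: Miss asks how much ground-truth occupancy the prediction leaves empty, and Fake asks how much ground-truth free space the prediction fills. This is a simplified illustration of our own, not the benchmark's definition (see the paper); the grids, the function name `miss_fake`, and the optional `region` mask are hypothetical.

```python
import numpy as np


def miss_fake(gt_occ, pred_occ, region=None):
    """Illustrative miss/fake rates on boolean occupancy grids (hypothetical).

    miss: fraction of ground-truth occupied cells that the prediction leaves empty.
    fake: fraction of ground-truth free cells that the prediction marks occupied.
    region: optional boolean mask restricting evaluation to a subset of cells.
    """
    gt_occ = np.asarray(gt_occ, dtype=bool)
    pred_occ = np.asarray(pred_occ, dtype=bool)
    if region is None:
        region = np.ones_like(gt_occ, dtype=bool)
    region = np.asarray(region, dtype=bool)

    occupied = gt_occ & region
    free = ~gt_occ & region
    # max(..., 1) avoids division by zero when a category is empty.
    miss = np.count_nonzero(occupied & ~pred_occ) / max(np.count_nonzero(occupied), 1)
    fake = np.count_nonzero(free & pred_occ) / max(np.count_nonzero(free), 1)
    return miss, fake
```

Passing a `region` mask that covers only cells above the visible street surface gives a restricted variant in the spirit of MissSt and FakeSt.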

MissSt

Same as Miss, but restricted to obstacles directly above the visible street surface (e.g. boom gates, branches, side mirrors).

FakeSt

Same as Fake, but restricted to the area directly above the visible street surface.